So, I finally had some time this week to get back to Google Maps, and it’s been going pretty well so far. I started by trying to figure out how to pull images out of WebKit’s spiderweb. Sadly, the WebResourceLoadDelegate never actually provides you with the response data, so you have to go querying the WebDataSource for the main frame… it’s a roundabout way, but it works. The next problem is that a WebResource only returns its contents as an NSData instance, and class-dump assures me there’s no magic private category method to help out with that. D’oh.

So, I started looking at ways to get a usable image representation from an NSData. The three approaches I looked at were:

- `NSImage`: using `-initWithData:`, but what does it actually expect from the data?
- `CIImage`: `+imageWithData:`, but same as above, and the documentation seems to imply the parameter is the raw bitmap data, not file data.
- `CGImage`, via `CGImageSource`: `CGImageSourceCreateWithData()` can be given a UTI of the expected image type, which can be obtained using `UTTypeCreatePreferredIdentifierForTag` given the MIME type, which is available from the `WebResource`.
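For the record, the CGImageSource route could look something like the following. This is only a rough sketch, not code from the project: the helper name `CreateCGImageFromData` is made up, it assumes ARC (hence the `__bridge` casts), and it assumes the UTI is passed as a type hint through the options dictionary via `kCGImageSourceTypeIdentifierHint`.

```objc
#import <Foundation/Foundation.h>
#import <ImageIO/ImageIO.h>
#import <CoreServices/CoreServices.h>

// Hypothetical helper: decode file-format image data (PNG, JPEG, ...) into a CGImage.
// The caller owns the returned image and must CGImageRelease() it.
static CGImageRef CreateCGImageFromData(NSData *data, NSString *mimeType)
{
    if (data == nil) {
        return NULL;
    }

    NSDictionary *options = nil;
    if (mimeType != nil) {
        // Map the MIME type (e.g. "image/png") to a UTI (e.g. "public.png").
        CFStringRef uti = UTTypeCreatePreferredIdentifierForTag(kUTTagClassMIMEType,
                                                                (__bridge CFStringRef)mimeType,
                                                                NULL);
        if (uti != NULL) {
            // The UTI is only a hint; ImageIO still sniffs the data itself.
            options = @{ (__bridge id)kCGImageSourceTypeIdentifierHint : (__bridge id)uti };
            CFRelease(uti); // the dictionary holds its own reference
        }
    }

    CGImageSourceRef source = CGImageSourceCreateWithData((__bridge CFDataRef)data,
                                                          (__bridge CFDictionaryRef)options);
    if (source == NULL) {
        return NULL;
    }

    // Index 0 is the first (and usually only) image in the data.
    CGImageRef image = CGImageSourceCreateImageAtIndex(source, 0, NULL);
    CFRelease(source);
    return image;
}
```

The data and MIME type would come from the WebResource's `-data` and `-MIMEType` methods.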

Now, ultimately this all goes into an OpenGL view, so CIImage isn’t actually much use since it doesn’t seem to have any methods for getting data back out. CGImage is probably the best; it seems to be what others are using in relation to OpenGL stuff. But it’s relatively straightforward to get a CGImageRef from an NSImage, so I can worry about that later if need be.
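If it does come to that, one way to get from an NSImage to a CGImageRef is sketched below. It assumes Mac OS X 10.6 or later, where `-CGImageForProposedRect:context:hints:` is available; the function name `CGImageFromNSImage` is just an illustrative placeholder.

```objc
#import <Cocoa/Cocoa.h>

// Hypothetical helper: ask AppKit for a CGImage backing the given NSImage.
// The result is not owned by the caller; retain it with CGImageRetain()
// if it needs to outlive the current autorelease pool.
static CGImageRef CGImageFromNSImage(NSImage *image)
{
    // NULL rect: let AppKit use the image's own size.
    // nil context and hints: no particular drawing destination is assumed.
    return [image CGImageForProposedRect:NULL context:nil hints:nil];
}
```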

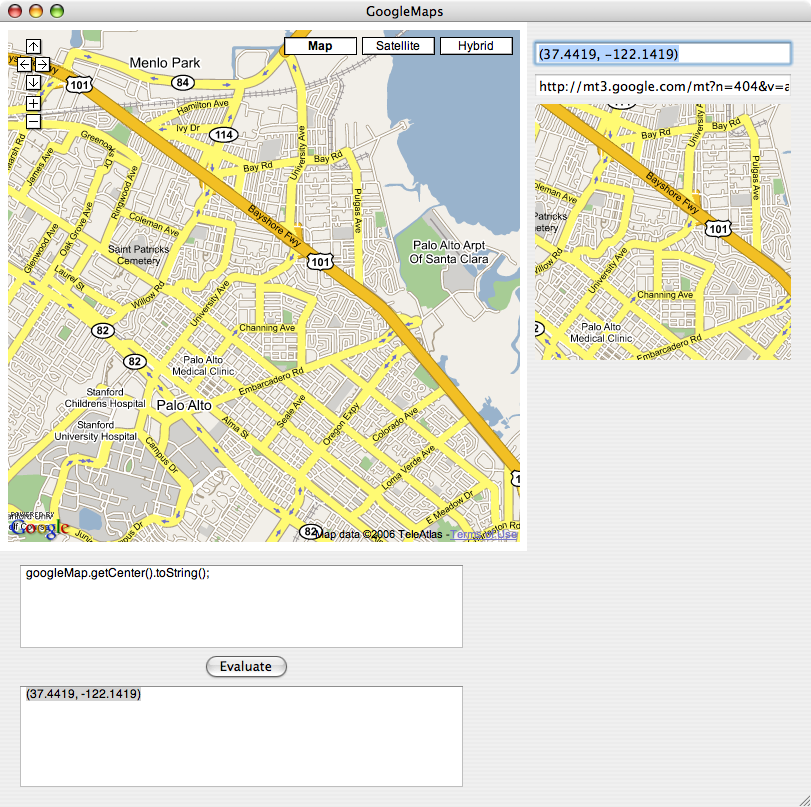

For the time being, the most straightforward solution was to try out NSImage first. And miraculously enough, it works. So now, as the image above demonstrates, I can obtain the image for any particular lat/long I like.
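Roughly, the whole path from WebKit to an NSImage boils down to something like this. It is a minimal sketch rather than the actual project code: the function name and its parameters are hypothetical, and it assumes ARC, a page that has finished loading, and that the URL of the wanted image is already known.

```objc
#import <Cocoa/Cocoa.h>
#import <WebKit/WebKit.h>

// Hypothetical helper: look up an already-loaded subresource by URL and
// hand its bytes to NSImage. Returns nil if the resource is missing or
// the data cannot be decoded as an image.
static NSImage *ImageForSubresource(WebView *webView, NSURL *imageURL)
{
    // The load delegate never hands over the response data, so ask the
    // main frame's data source for the resource instead.
    WebDataSource *dataSource = [[webView mainFrame] dataSource];
    WebResource *resource = [dataSource subresourceForURL:imageURL];
    if (resource == nil) {
        return nil;
    }

    // -initWithData: accepts file-format data (PNG, JPEG, GIF, ...).
    return [[NSImage alloc] initWithData:[resource data]];
}
```

If the URL isn’t known up front, the data source’s `-subresources` array can be walked instead, picking out entries by their `-MIMEType`.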

The next step is to build a proper class to cache these images and GPS metadata.